Are AI Storybooks Safe for Kids?

Parents are right to ask this question first.

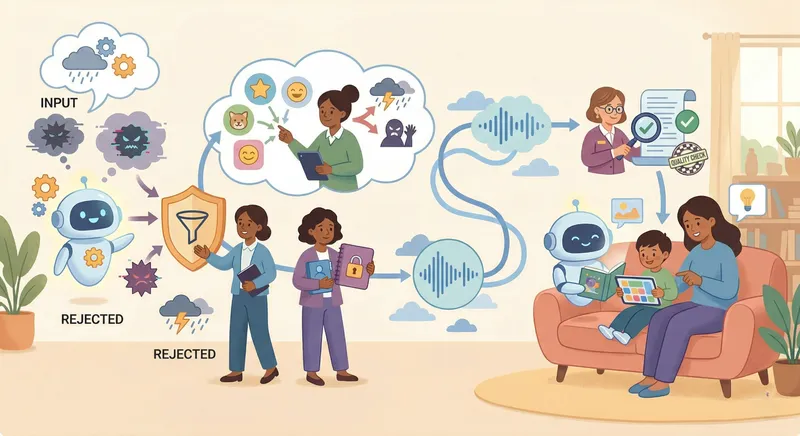

AI can make children’s stories feel more personal, more flexible, and more engaging. But “AI-powered” is not a safety standard by itself. What matters is how the system is designed, what boundaries it uses, how it handles sensitive topics, and whether it treats the child as someone who needs care rather than just more content. MIBOOKO explains its own approach in plain language on its Methodology & Safety page, covering age-appropriateness, content boundaries, privacy, and quality.

A good answer is not “yes, all AI storybooks are safe.” That would be too vague and too easy. The better answer is this: AI storybooks can be safe for kids when they are built inside clear child-aware frameworks.

That means guided storytelling instead of uncontrolled output. It means age-appropriate language and emotional pacing. It means content boundaries, privacy-by-design, optional photo-free personalization, and a parent-first approach to difficult topics. MIBOOKO’s public safety page also takes the more credible position that no system can promise perfect outcomes in every case.

Why this question matters

Children do not experience stories the way adults do.

A child may absorb the tone of a story before fully understanding the plot. They may remember how a scene felt even if they cannot explain it clearly. That is why safety in children’s storytelling is not only about blocking obvious harm. It is also about making sure the language, intensity, conflict, and resolution fit the child’s stage and emotional needs.

This matters even more in personalized storytelling. When a child sees themselves in the story, the experience can feel more meaningful, more emotionally engaging, and easier to remember. If you want the evidence behind that, MIBOOKO’s Research page explains how personalization can support attention, emotional engagement, literacy, empathy, confidence, and parent-child bonding.

What makes an AI storybook safer for children?

A safer AI storybook usually has five things in place.

1. Age-appropriate story design

Safe children’s storytelling starts with structure.

The vocabulary should fit the child’s age. The emotional tone should stay within a range that feels manageable. Conflict should be understandable, and the resolution should feel secure rather than chaotic or frightening. A good child-focused system does not just “write for kids.” It shapes the story so the child can process it safely. MIBOOKO’s Methodology & Safety page describes this in terms of age-appropriate language, themes, intensity, and quality checks for tone, structure, and consistency.

That includes:

- language the child can follow
- emotional intensity that does not overwhelm
- clear choices and clear outcomes
- calm, constructive resolution

2. Clear content boundaries

This is one of the biggest differences between a child-focused story system and a general AI tool.

A safer AI storybook needs strict boundaries around harmful material. That includes sexually explicit content, hate or discriminatory material, graphic violence, instructions for wrongdoing, and content that encourages self-harm or harm to others. Without those boundaries, “personalized” can quickly become unpredictable. MIBOOKO publicly says it uses content boundaries intended to reduce those kinds of outputs.

Parents should expect those limits to exist before they ever trust a child-facing AI product.

3. Safety-first handling of sensitive topics

Some topics are part of childhood, but they still need careful handling.

Bullying, exclusion, worries, frustration, grief, and fear should not be treated casually. A safer story system does not simply drop those themes into a story for dramatic effect. It handles them with calm language, avoids graphic or distressing detail, and shifts the focus toward safe choices, trusted adults, reassurance, and repair. Parents who want practical, topic-based reading routes built on that safety approach can start with the Skills & Challenges hub.

That is a much stronger approach than either extreme: pretending difficult topics do not exist, or letting AI improvise its way through them.

4. Privacy by design

Safety is not only about what appears in the story. It is also about how much a family is expected to share.

A child-focused product should ask for only what genuinely helps create the story. That usually means light story preferences, a first name or nickname, an age range, and optional personalization details. It should not pressure families to upload a child photo or provide unnecessary sensitive information just to unlock a good result. MIBOOKO’s safety page explicitly frames photo-based personalization as optional and says unnecessary sensitive details should not be required for a strong story experience.

A safer system makes optional fields truly optional and gives families ways to personalize with less exposure, not more.

5. Quality checks, not just generation

A safe story should also be a good story.

A result can be technically “not harmful” and still be poor for a child audience. It can be confusing, emotionally clumsy, repetitive, or badly paced. That is why quality matters here. Good quality control supports safety by improving clarity, tone, consistency, and coherence. MIBOOKO’s Methodology & Safety page describes quality checks focused on clarity, tone, structure, consistency, and age-appropriate resolution.

For children, a messy story is not just weaker. It can also feel unsettling or frustrating.

What parents should watch out for

If you are evaluating any AI storybook product, do not stop at the label.

“AI-powered” does not tell you whether the story is guided or fully open-ended, whether the system has topic boundaries, whether it protects family privacy, whether it limits emotional intensity, or whether it is honest about what it can and cannot do. Those are the questions that matter.

Another warning sign is when a product starts sounding like therapy, diagnosis, or professional mental-health support. Stories can help with calm routines, confidence, emotional understanding, and shared reading. But they should not be presented as a substitute for qualified help when a child needs real support. MIBOOKO’s safety page is careful on this point and explicitly says its stories are not a replacement for therapy or professional support.

How MIBOOKO approaches AI safety

MIBOOKO’s public trust pages point to a safety model built around guided personalization rather than unrestricted output.

Its approach focuses on age-aware story design, content boundaries, privacy-by-design, optional photo-free personalization, and quality checks. It also makes an admission that many brands avoid: no system can promise perfect results in every case. That kind of restraint makes the safety claim more credible, not less.

MIBOOKO also frames personalization in developmental terms rather than novelty terms. The broader idea is that seeing themselves in a story can help children engage more deeply, remember more, and connect more strongly to the reading moment. But if that is the benefit, then careful design becomes even more important. Its Research page and Methodology & Safety page work best when read together for exactly that reason.

That is the right way to talk about AI safety for kids: not as magic, not as a buzzword, and not as an empty promise, but as a design responsibility.

Final answer

So, are AI storybooks safe for kids?

They can be.

But only when they are built with clear child-safe guardrails: age-appropriate language, emotional pacing, content limits, careful handling of sensitive topics, privacy-conscious personalization, and quality control that keeps the reading experience calm and coherent.

That is the standard worth using.

A children’s story powered by AI should not ask parents to trust the technology blindly. It should earn trust by showing how the system was designed, what it avoids, and what kind of reading experience it is trying to protect.

That is the difference between “AI for content” and “AI for families.”

If you want the product-level explanation of how the experience works from story choice to delivery, start with How MIBOOKO Works. If you are more interested in MIBOOKO’s continuing, chapter-by-chapter format, explore MIBOOKO Storybook.